Apocalypse Now

A cyberethnographer responds to AI doomsday predictions

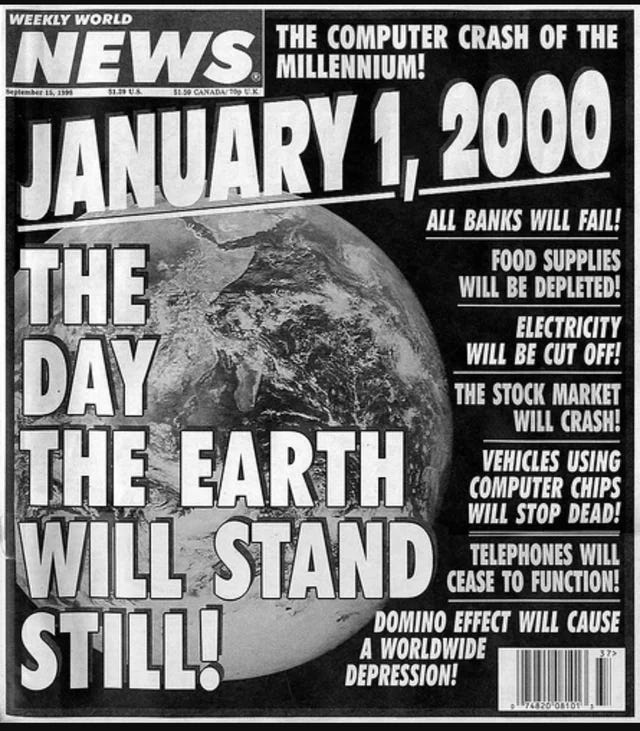

We’re obsessed with the apocalypse, and we have been for a long time. Since 66 CE, a millenarian obsession has lurked within mankind. Take a look at this list from Wikipedia of all the times the apocalypse was predicted. I invite you to consider how many times it has actually happened. I’m writing this, aren’t I? We’re still here, aren’t we? And thus, wh…